Executive Overview

By implementing Application Data Management (ADM) solutions, organizations gain the full benefits of master data management (MDM) concepts and govern their data better, while avoiding the complexity and overhead of generic MDM tools.

By producing high-quality data, organizations set themselves on course to become a Zero Latency Enterprise. High-quality data also helps them reap better ROI from their execution systems such as ERP.

Key Challenges

Organizations wishing to transform their operations to achieve a Zero-Latency Enterprise (ZLE)[1] face several challenges, including:

- Too many disparate business applications operate as independent silos, hindering the seamless flow of information
- Data is not treated as a first-class enterprise asset, leading to poor quality of the master data used in business processes

These cause undue delays and repeated rework, lengthening the response times for business decision-making.

Organizations wishing to improve their business operations and become more agile and responsive to rapidly changing external environments must seriously adopt the principles of a zero-latency enterprise.

The Zero Latency Enterprise (ZLE)

"The big never eat the small - the fast eat the slow"

-Jason Jennings and Laurence Haughton

This quote aptly illustrates the paradigm shift in competitive dynamics in today's business environment. Conventional wisdom tells us that large companies will succeed in their markets, but in many industries that no longer holds. At a time of rapid technological innovation, when customers expect highly personalized service on demand, it is impossible to ignore the importance of time-based competition. Business leaders across industries acknowledge that speed is key to gaining a competitive advantage. These leaders turn to the potential of Information Technology to automate and streamline their business operations and transform them from functional silos into finely tuned, reusable business capabilities.

The IT community has offered to support these business initiatives with various solutions and infrastructure offerings in tune with the changing business needs. However, MDM vendors often obscure the path to that outcome by employing confusing buzzwords and acronyms to highlight their competitive strengths. Real-time Enterprise, Adaptive Enterprise, Agile Enterprise, Event-driven Apps, Zero-Latency Enterprise: over the last couple of decades, there has been no dearth of new terms and acronyms in the business press, all propagating the same message about the critical importance of sensing relevant events and responding appropriately at the right time.

The notion of latency in business terms is an important one to understand and act on. In business terms, latency is the time lag between a business event and the availability of its results for decision-making or further downstream transactions. In a Zero Latency Enterprise (ZLE), that time lag is zero. In other words, current information is immediately available to all parts of the company where it is needed.
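To make the definition concrete, the short sketch below measures latency as the difference between two timestamps: when a business event occurred and when its result became usable downstream. The function name and the sample timestamps are illustrative assumptions, not part of any particular product.

```python
from datetime import datetime, timedelta


def business_latency(event_time: datetime, available_time: datetime) -> timedelta:
    """Return the lag between a business event and the moment its
    result is available for decisions or downstream transactions."""
    if available_time < event_time:
        raise ValueError("information cannot be available before the event occurs")
    return available_time - event_time


# Hypothetical example: an order is booked in the ERP at 09:00 and
# becomes visible in the reporting system at 09:45.
order_booked = datetime(2024, 1, 15, 9, 0)
visible_downstream = datetime(2024, 1, 15, 9, 45)

lag = business_latency(order_booked, visible_downstream)
print(lag)  # 0:45:00
```

In a ZLE, the goal is for this difference to approach zero for every consumer of the information.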

From an IT standpoint, you enable a Zero Latency Enterprise (ZLE) by integrating all business applications in real-time.

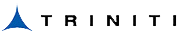

For organizations that successfully manage this transformation into a ZLE, the promised benefits are many:

- Easier integration of acquisitions through a standard set of core capabilities
- Elimination of errors and shortening of the response cycle through automated processes
- Increased regulatory compliance: the ability to rapidly analyze and respond appropriately to changing government policies and rules
- Enhanced capability for business model innovation, helping the organization react quickly to new, emerging business needs
- A positive impact on both top and bottom lines, resulting in increased shareholder value and market capitalization

Business executives tasked with laying out the blueprint for a transformation towards such agile enterprises face several important questions:

- What constitutes the complete set of components of the IT solution, and what are their inter-relationships?
- What parts of the current IT environment need to be part of the overall solution?
- How should the competing claims of vendors be evaluated to reach a buying decision?
- What milestones should be planned for as part of the project?

Getting the right answers to these questions takes up a considerable part of the planning exercise.

While these are crucial aspects to consider, savvy business executives combine a big-picture view with attention to low-level details, much as experienced building architects do. They understand that while technology is a crucial enabler for change, what powers that change is the availability of high-fidelity representations of business objects, along with unambiguous semantics that explain the meanings of these data classes, their attributes, and their relationships.

Don’t Forget the Data!

Without over-emphasizing the obvious, it is quite clear that numerous business initiatives rely on the presence of high-quality master data to supply reliable, trustworthy facts to support making critical decisions. As an example, consider the following business programs:

- Rationalize the vendor base through Enterprise Spend Management or strategic sourcing
- Minimize inventory and improve profitability and visibility by focusing on parts reuse
- Integrate mergers and acquisitions efficiently and effectively through the synergies gained by consolidating operations, supply chains, and product lines

When master data is inaccurate, incomplete, out-of-date, or duplicated, business processes magnify these data flaws and propagate them into other parts of the organization.

The latency concept applies both to the abstract domain of information supply chains and to the physical supply chains that handle the flow of goods and materials for manufacturing operations. Lifecycle management of data within the organization is therefore just as relevant to eliminating latency and achieving a ZLE.

For a ZLE, closed-loop operations imply eliminating the latency between transactional and analytical activities.

In closed-loop business operations, transactional data captured in backend execution systems such as ERP/CRM is shared in near real time with downstream Business Intelligence (BI) systems. Since the effectiveness of executive decision-making depends on the quality of the data available, well-managed data assets provide a competitive advantage. Flawed master data impacts the bottom line.

Poor Master Data Impacts the Bottom Line

As noted above, numerous business initiatives rely on high-quality master data to supply reliable, trustworthy facts for key decisions. Yet master data handling in many organizations is in disarray. Because many IT systems and applications are siloed, master data gets stored redundantly in multiple places. This results in disparate nomenclatures for the same entity, differing data structures and definitions, and inconsistent use of rules to enforce business constraints. Poor master data manifests in various dimensions:

- Inaccurate
- Logically invalid
- Incomplete
- Duplicate records pertaining to the same real-world entity
- Out of date (not current) or irrelevant
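As an illustration of how several of these dimensions can be checked mechanically, the sketch below flags incomplete, logically invalid, out-of-date, and duplicate vendor records. The record layout, field names, allowed country codes, and two-year freshness threshold are all hypothetical assumptions chosen for the example. Inaccuracy is not covered, since detecting it requires comparison against a trusted external source.

```python
from datetime import date

# Hypothetical vendor master records; field names are illustrative only.
records = [
    {"id": "V001", "name": "Acme Corp", "tax_id": "12-3456789",
     "country": "US", "updated": date(2024, 3, 1)},
    {"id": "V002", "name": "ACME Corp.", "tax_id": "12-3456789",
     "country": "US", "updated": date(2019, 6, 30)},
    {"id": "V003", "name": "Globex", "tax_id": "",
     "country": "ZZ", "updated": date(2024, 1, 10)},
]

VALID_COUNTRIES = {"US", "DE", "IN"}          # assumed reference list
REQUIRED_FIELDS = ("name", "tax_id", "country")


def quality_issues(records, as_of, max_age_days=730):
    """Map each record id to the list of quality problems found in it."""
    issues = {r["id"]: [] for r in records}
    first_seen = {}  # tax_id -> id of the first record carrying it
    for r in records:
        if any(not r.get(field) for field in REQUIRED_FIELDS):
            issues[r["id"]].append("incomplete")
        if r["country"] not in VALID_COUNTRIES:
            issues[r["id"]].append("logically invalid")
        if (as_of - r["updated"]).days > max_age_days:
            issues[r["id"]].append("out of date")
        tax_id = r.get("tax_id")
        if tax_id:
            if tax_id in first_seen:
                issues[r["id"]].append(f"duplicate of {first_seen[tax_id]}")
            else:
                first_seen[tax_id] = r["id"]
    return issues


for record_id, problems in quality_issues(records, as_of=date(2024, 6, 1)).items():
    print(record_id, problems or "ok")
```

Matching duplicates on a single key such as a tax identifier is a deliberate simplification; real-world matching usually needs fuzzy comparison across several attributes.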

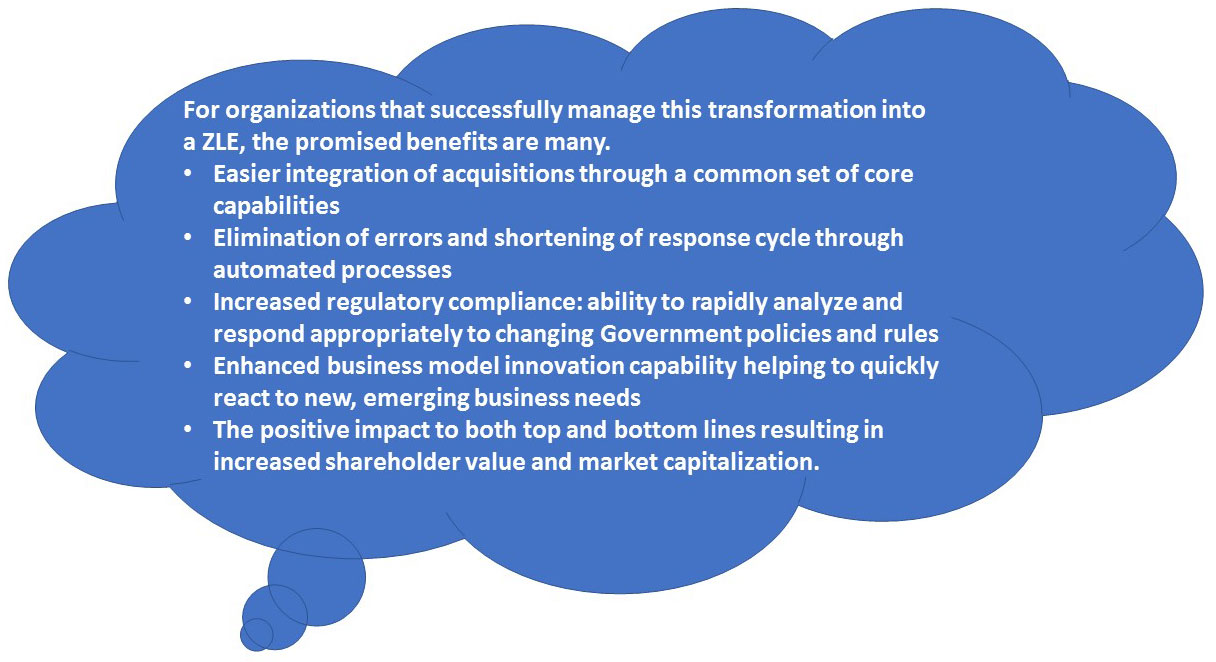

When business processes use such error-ridden data to run their transactions, they magnify these data deficiencies and propagate them to other departments. This has a profound impact on the company's operational and financial results. Some or all of the following adverse outcomes result:

- Executives stop using operational BI reports, voiding the ROI assumptions of BI initiatives
- Executives are forced to manage by instinct and gut feel rather than by facts, risking poor decisions
- Vendors may capitalize by charging more for goods and services
- Costs of fulfilling customer demand increase on account of wrong shipments
- Inventory levels run high because of poor supply chain planning
- Ghost demand from customers increases because of lower fulfillment expectancy
- In extreme cases, shareholders lose confidence, and market capitalization plummets

Master Data Management (MDM)

Due to growing awareness of the adverse outcomes of bad master data quality and its impact on the business bottom line, many companies have adopted a more disciplined approach to managing their information assets by employing solutions under the label of Master Data Management (MDM). There are three distinct perspectives to understand when considering an evaluation of potential MDM solutions:

- First and foremost, master data management is a business concern. Business leaders should therefore drive it with a strategic, corporate-wide focus
- MDM is primarily a discipline, with a clear set of concepts that educate users on how to govern master data. Its scope extends both within and outside the enterprise
- MDM is also a tool that offers features to automate the processes involved in the lifecycle management of master data

What is the Ideal MDM solution?

An ideal MDM solution would be one where:

- The MDM system owns 100% authorship of all the relevant data attributes needed by all the member applications in the organization
- Master data is available to all consumers instantaneously, without any additional data integration effort or other associated overheads

An MDM tool with the characteristics mentioned above would alleviate all master data concerns cost-effectively and efficiently. In reality, however, many tools fall far short of that ideal, and many business environments do not allow such theoretical applications to take root.

How should organizations leverage the powerful MDM concepts without being hit by the downsides of MDM tools? In the absence of an ideal MDM solution, organizations have to rely on a more competent variation of deploying the MDM tool, one from which a mission-critical application such as an ERP system derives the benefit. Such solutions deliver the required master data with zero latency, thus eliminating waste in the information supply chain. The discipline of Application Data Management (ADM) helps realize this potential of MDM.

Application Data Management (ADM): A Refined Form of MDM

Application Data Management (ADM) is a discipline akin to MDM, but with a difference: in ADM, the scope of master data governance is a specific application (or set of applications).

ADM serves as a quality staging area for the master data needs of the specific application. In doing so, it functions as a decoupling point between upstream data creation activities and the various downstream data consumers, maintaining a clear segregation of duties between data provisioning and consumption. This decoupling enables all quality checks and balances to be defined centrally as business metadata and then applied consistently to all data records. Because data quality is enforced centrally, consumers no longer carry the burden of verifying master data themselves, which eliminates the need to build redundant data validations into the member application's functions.

Information de-coupling point
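The decoupling point described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the rule set, the field names, and the `StagingArea` class are hypothetical and do not represent any specific ADM product. The idea shown is that rules live in one central table of metadata, every submitted record passes through the same checks, and consumers read only what has been published.

```python
from typing import Callable

# Centrally defined rules (business metadata): field -> (description, check).
# Rules and field names are hypothetical examples.
RULES: dict[str, tuple[str, Callable[[object], bool]]] = {
    "part_number": ("must be a non-empty string",
                    lambda v: isinstance(v, str) and v.strip() != ""),
    "unit_of_measure": ("must be a known unit",
                        lambda v: v in {"EA", "KG", "M"}),
    "lead_time_days": ("must be a non-negative integer",
                       lambda v: isinstance(v, int) and v >= 0),
}


def validate(record: dict) -> list[str]:
    """Apply every central rule to a record and return the violations."""
    return [
        f"{field}: {description}"
        for field, (description, check) in RULES.items()
        if not check(record.get(field))
    ]


class StagingArea:
    """Decoupling point: producers submit records, consumers see only valid ones."""

    def __init__(self) -> None:
        self.published: list[dict] = []
        self.rejected: list[tuple[dict, list[str]]] = []

    def submit(self, record: dict) -> bool:
        errors = validate(record)
        if errors:
            self.rejected.append((record, errors))
            return False
        self.published.append(record)
        return True


staging = StagingArea()
staging.submit({"part_number": "P-100", "unit_of_measure": "EA", "lead_time_days": 5})
staging.submit({"part_number": "", "unit_of_measure": "BOX", "lead_time_days": -1})

print(len(staging.published), "published;", len(staging.rejected), "rejected")
```

Because the consuming application reads only from `published`, it does not need its own copy of these checks, and a rule changed in `RULES` takes effect for every record submitted afterwards.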

Apart from providing the data governance features of a generic MDM tool, an ADM tool's chief advantage is that it is pre-integrated with downstream consuming applications such as ERP. It seamlessly delivers accurate information to them, thereby eliminating latency in provisioning high-quality data to the intended consumers.

An added dimension of ADM is that it applies to all data entities used in the business: both master data and transactional entities such as invoices, orders, and requisitions. ADM is the umbrella under which all data is managed across its lifecycle of usage in the organization.

Thus, ADM stays true to the spirit of MDM when it comes to managing master data for a given application, and at the same time, ADM enhances MDM by making specific features available in the tools.

Comparative Assessment of MDM and ADM

While both MDM and ADM support similar foundational concepts to manage the lifecycle of master data, the differences between them become visible when one observes the capabilities of the tools currently available in the market. The following table provides an assessment across different dimensions of the MDM discipline and its refined version ADM.

| Dimension | MDM | ADM |
|---|---|---|
| Scope of usage | Typically aims to become the supplier of high-quality master data for several applications of the organization. | Tailor-made to deliver high-quality master data to specific mission-critical applications. |
| Scope of data | Master data across multiple domains such as Product, Customer, Location, and Asset, across their entire lifecycle of usage. | Covers master data as well as other transactional entities used in the applications. |
| Data authority and control | In many implementations, MDM and current applications both continue to maintain parts of master data. As more applications are involved, it becomes a maintenance nightmare to ensure that business and logical rules for data management are applied consistently across all the applications and the MDM system. | 100% authoring of all master data attributes and relationships that are used by the specific applications. Member applications become consumers of master data, without any concerns about the quality of the data being supplied. Data governance is effectively enforced because of single-source authoring. |
| Survivorship | Typically maintained only in the MDM system, where it serves as the reference for the golden record in case of conflict among member applications. | Goes beyond the golden record and also reflects other business documents such as invoices, sales orders, and contracts with the right account information. |
| Data provisioning | Different applications impose different latencies on the data consumption process, depending on their operating characteristics. The MDM system has to support multiple modes of data consumption, such as batch, near real-time, and data feeds, using costly data integration tools, and has to be architected to handle all of these possibilities. | Pre-built integration with the intended consuming applications means that all master data is delivered for instantaneous usage without any overheads of data transformation and integration. |
| When best to use | When shared master data has to be harmonized across multiple applications that cannot easily be changed, at least in the short term, to work under a single ownership model of master data. True enterprise MDM is a very long journey! | When an organization runs more than 80% of its operations on a mission-critical application system such as ERP and needs to get master data for this system absolutely right at very low cost and without much time to waste. |

Power of Triniti MDM

Triniti's MDM, with its ADM extensions, is the ideal solution for all your master data needs. It fulfills those needs economically, in terms of both software and implementation.